Running a 24/7 Live YouTube Channel with Real-Time Translation

What Most Broadcasters Don’t Realize Until It’s Too Late

In today’s streaming environment, most broadcasters are doing a lot right.

They invest in high-quality cameras.

They deploy professional encoders.

They distribute across YouTube and social platforms.

From the outside, everything looks optimized.

But when you step back for a moment, something interesting starts to surface.

How much of your current audience can actually understand your content in their native language?

For many broadcasters, the answer is smaller than expected.

It is not uncommon to see:

- 60% or more of viewers coming from international regions

- But significantly lower engagement outside the primary language

Viewers may click. They may watch briefly. But if they cannot follow the content, they often leave quietly.

Which raises another question.

How would you even know if they couldn’t?

Most teams are not measuring this directly.

They look at views.

They look at geography.

But they are not always correlating:

- Watch time by region

- Drop-off rates

- Engagement by language

So the signal is there. It just goes unnoticed.
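One way to surface that signal is to group sessions by region and compare watch time and early exits side by side. The sketch below is illustrative only: the session rows, the field layout, and the 120-second "early exit" threshold are all assumptions, not values from any particular analytics export.

```python
from collections import defaultdict

# Hypothetical per-session rows: (region, caption_language, seconds_watched).
# Real data would come from your own analytics export; this layout is an assumption.
SESSIONS = [
    ("US", "en", 1800),
    ("US", "en", 1200),
    ("MX", "en", 90),
    ("MX", "en", 45),
    ("MX", "es", 1500),
    ("BR", "en", 60),
]


def summarize_by_region(sessions, early_exit_seconds=120):
    """Return {region: (session_count, mean_watch_seconds, early_exit_rate)}."""
    grouped = defaultdict(list)
    for region, _language, seconds in sessions:
        grouped[region].append(seconds)

    summary = {}
    for region, watch_times in grouped.items():
        # An "early exit" is any session shorter than the assumed threshold.
        early_exits = sum(1 for s in watch_times if s < early_exit_seconds)
        summary[region] = (
            len(watch_times),
            sum(watch_times) / len(watch_times),
            early_exits / len(watch_times),
        )
    return summary


for region, (count, mean_s, exit_rate) in sorted(summarize_by_region(SESSIONS).items()):
    print(f"{region}: {count} sessions, {mean_s:.0f}s average, {exit_rate:.0%} early exits")
```

A region with plenty of sessions but a high early-exit rate is the pattern worth a closer look.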

A Shift That’s Already Happening

We are starting to see a new type of channel emerge.

24/7 live streams that are not just broadcasting content, but continuously translating it in real time.

Captions.

Voice dubbing.

Multiple languages.

All running automatically.

One example that is worth exploring:

https://www.youtube.com/@JTVJoyas

This is the first-ever live channel delivering English-to-Spanish captions and voice dubbing continuously on YouTube. That means live, automated translation that builds the brand and supports sales to the LATAM market around the clock.

No manual intervention.

Just running.

Which raises an interesting thought.

If this is now possible… what does that mean for audience expectations going forward?

Because historically, this is how expectations shift.

Subtitles on on-demand video were once optional. Now they are expected.

So it is worth considering:

As more channels begin offering real-time translation… how long before audiences expect it everywhere?

And if that expectation forms…

Where does that leave channels that remain single-language?

The Idea of “Set It and Forget It”

On the surface, the concept sounds simple.

You define your input stream.

You select your output languages.

You connect your workflow.

And the system runs continuously.

But let’s take a step back.

How many “set it and forget it” systems have you seen actually stay stable over time without the right foundation?

Short tests often look perfect.

A two-hour stream runs clean.

Everything appears aligned.

But when systems run for 12, 24, or 72 hours, something different happens.

Small issues begin to surface.

Not immediately.

But over time.

What Actually Breaks in 24/7 Streaming

Bitrate Stability

At first, everything looks fine.

Then small inconsistencies begin to appear.

Bitrate fluctuations.

Buffering.

Dropped segments.

These issues rarely show up in short tests, but they often emerge in long-duration streams.

Have you ever noticed how problems tend to appear only after extended runtime?

And when they do…

How long does it typically take before someone notices?

In many workflows, especially without upstream monitoring, issues can go undetected for hours.

Which means hours of degraded viewer experience.
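Catching this sooner does not require much. As a rough sketch, assuming you can sample the encoder's output bitrate at a fixed interval, a rolling-median check can flag samples that stray too far from the recent baseline. The window size, the 25% tolerance, and the sample values are assumptions chosen for illustration.

```python
from collections import deque
from statistics import median


def find_bitrate_anomalies(samples_kbps, window=5, tolerance=0.25):
    """Return indexes of samples that deviate from the rolling median by more than `tolerance`.

    The first `window` samples only seed the baseline and are never flagged.
    Flagged samples are kept out of the baseline, so a permanent, intentional
    bitrate change would keep being flagged until the baseline is reset.
    """
    recent = deque(maxlen=window)
    anomalies = []
    for index, kbps in enumerate(samples_kbps):
        if len(recent) == window:
            baseline = median(recent)
            if abs(kbps - baseline) > tolerance * baseline:
                anomalies.append(index)
                continue  # do not let the outlier shift the baseline
        recent.append(kbps)
    return anomalies


samples = [6000, 6050, 5980, 6020, 6010, 5990, 3100, 6005, 2900, 6030]
print(find_bitrate_anomalies(samples))  # [6, 8]
```

Wired to an alert, a check like this turns "someone eventually notices" into a notification within a few samples.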

Multiplexing Complexity

Adding captions is one thing.

Adding multiple live dubbed audio tracks is something else entirely.

Now you are dealing with:

- Synchronization

- Timing alignment

- Stream structure over long durations

At the start, everything may feel aligned.

But over time, even small timing shifts can occur.

Subtitles lag slightly.

Dubbed audio arrives just a bit early or late.

From a technical perspective, the difference may seem minor.

From a viewer perspective, it feels off.

When multiple languages are layered into a single stream, how confident are you that everything stays aligned after hours or even days of runtime?

And if things begin to drift…

What does that feel like for someone trying to follow along in another language?
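Drift is easier to manage once it is a number. The sketch below assumes you can periodically record the offset between the original speech and the dubbed audio (or caption) for the same segment. It then fits a least-squares line to estimate how fast that offset is growing. How the offsets are measured is left open, and the sample values are invented for illustration.

```python
def drift_ms_per_hour(samples):
    """Least-squares slope of offset (ms) against elapsed time, scaled to ms per hour.

    `samples` is a list of (elapsed_seconds, offset_ms) pairs, where offset_ms is
    how far the translated track trails the source at that moment.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_o = sum(o for _, o in samples) / n
    variance = sum((t - mean_t) ** 2 for t, _ in samples)
    if variance == 0:
        raise ValueError("samples must span more than one point in time")
    covariance = sum((t - mean_t) * (o - mean_o) for t, o in samples)
    return covariance / variance * 3600


# Hypothetical hourly measurements: the offset starts at 40 ms and keeps growing.
measurements = [(0, 40), (3600, 45), (7200, 50), (10800, 55)]
print(f"{drift_ms_per_hour(measurements):.1f} ms of drift per hour")
```

A slope near zero means the offset is stable, even if it is not zero. A steady positive slope is the kind of issue a two-hour test will not reveal.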

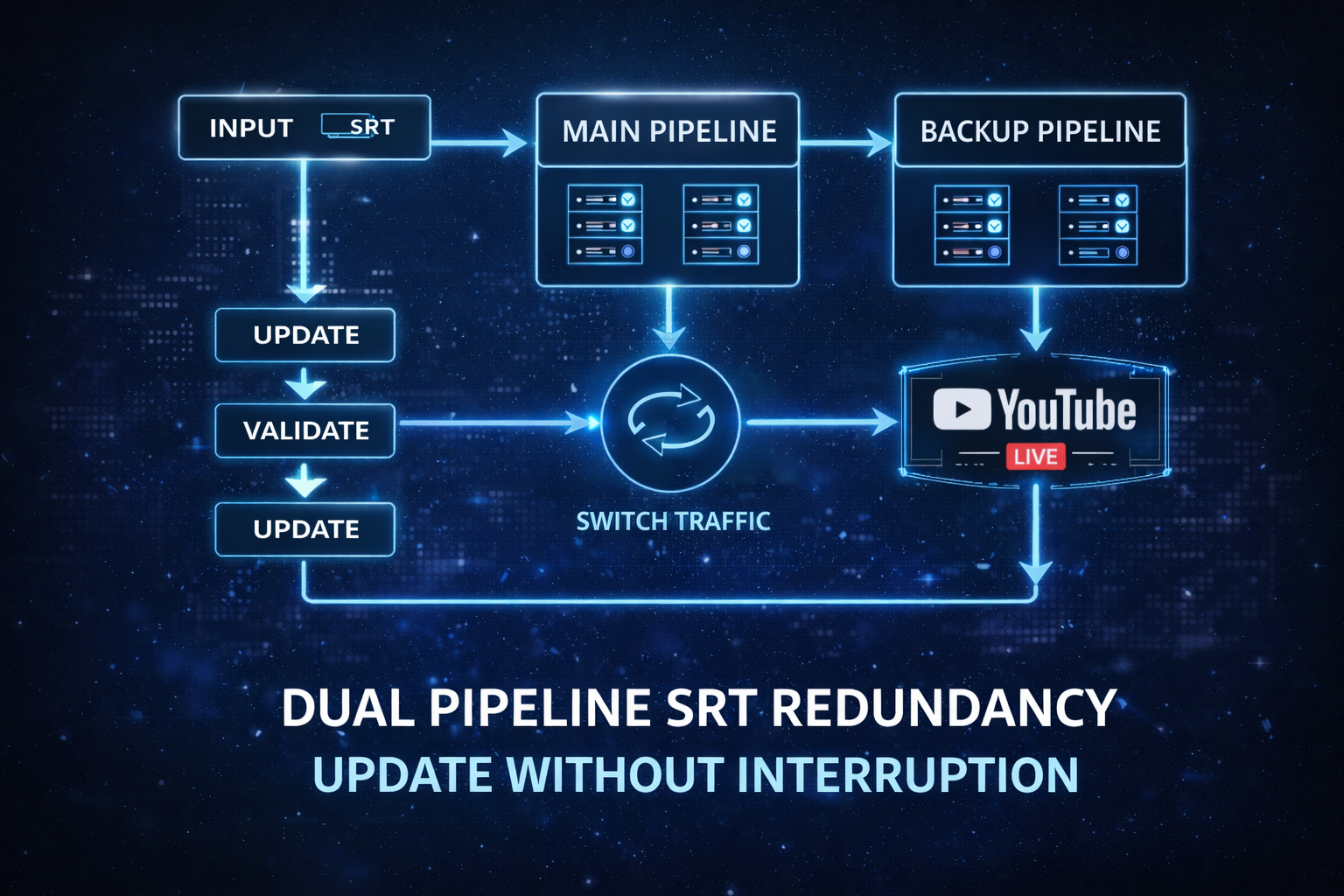

Redundancy… or Something More?

Most teams understand the need for redundancy.

Main pipeline.

Backup pipeline.

Failover protection.

That part is familiar.

But there is another side to this that is often overlooked.

Updating Without Disruption

In a 24/7 environment, your system is never truly finished.

There are always improvements:

- Better transcription accuracy

- More refined translations

- Updated prompts

- New AI models

So it raises a practical question.

When you identify an improvement, how easy is it to deploy it without affecting the live stream?

In many environments, updates are delayed.

Teams wait for maintenance windows.

Or avoid making changes altogether to reduce risk.

Which means improvements that could enhance quality today are postponed.

With a dual pipeline approach, something different becomes possible.

You can update the backup pipeline first.

Validate it in real time.

Shift traffic.

Then update the original pipeline.

No interruption.

No downtime.
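The sequence above can be sketched as a small piece of control logic. This is a simplified model, not a description of any specific product: the `Pipeline` and `DualPipeline` names are hypothetical, "version" stands in for whatever you are changing (a model, a prompt, a glossary), and `validate` is a placeholder for your own real-time quality check.

```python
from dataclasses import dataclass


@dataclass
class Pipeline:
    name: str
    version: str


class DualPipeline:
    """Hypothetical model of a main/backup pair where only `active` feeds viewers."""

    def __init__(self, active, standby):
        self.active = active
        self.standby = standby

    def rolling_update(self, new_version, validate):
        """Update the standby, validate it, shift traffic, then update the other side.

        Returns True if the rollout completed, or False if validation failed and
        the standby was rolled back. The active pipeline is untouched until the swap.
        """
        previous_version = self.standby.version
        self.standby.version = new_version
        if not validate(self.standby):
            self.standby.version = previous_version  # roll back; viewers are unaffected
            return False
        self.active, self.standby = self.standby, self.active  # shift traffic
        self.standby.version = new_version  # the original pipeline, now off air
        return True


pair = DualPipeline(Pipeline("main", "v1"), Pipeline("backup", "v1"))
completed = pair.rolling_update("v2", validate=lambda pipeline: True)
print(completed, pair.active, pair.standby)
```

The key property is that a failed validation never touches the pipeline that viewers are watching.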

If your system cannot evolve while it is live… how often do improvements get delayed?

And over time…

What does that do to the overall quality your audience experiences?

Preparation Is Where Most Quality Is Won

There is a common assumption that automation reduces effort.

In reality, it shifts where the effort happens.

ASR Dictionaries

Names.

Brands.

Domain-specific terms.

Many transcription errors are not caused by the automatic speech recognition (ASR) model itself, but by missing vocabulary.

Player names.

Locations.

Industry terminology.

When these are not defined, errors repeat consistently.

When transcription issues appear in your workflow, how often are they tied to missing terminology?

And if those errors carry forward…

How does that affect translation, captions, and ultimately the viewer experience?
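One inexpensive way to find out is to scan recent transcripts for words that nearly match a term you care about but are not spelled like it. The sketch below uses Python's standard `difflib` for fuzzy matching. The term list, the sample transcript, and the 0.8 similarity cutoff are assumptions for illustration, and the simple word pattern only handles unaccented Latin letters.

```python
import difflib
import re


def suggest_vocabulary_fixes(transcript, dictionary, cutoff=0.8):
    """Map transcript words to dictionary terms they closely resemble but do not match."""
    known = {term.lower(): term for term in dictionary}
    suggestions = {}
    for word in re.findall(r"[A-Za-z']+", transcript):
        lowered = word.lower()
        if lowered in known:
            continue  # already spelled as the dictionary expects
        matches = difflib.get_close_matches(lowered, list(known), n=1, cutoff=cutoff)
        if matches:
            suggestions[word] = known[matches[0]]
    return suggestions


# Hypothetical domain terms and a transcript with two near misses.
terms = ["Tanzanite", "Moissanite"]
text = "This tansanite ring pairs with a moissanight pendant"
print(suggest_vocabulary_fixes(text, terms))
```

Words that show up here repeatedly are good candidates for the ASR dictionary, since the same miss will otherwise flow into every caption and every translated language.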

Translation Consistency

Without a defined glossary, translation can vary.

The same phrase may be translated differently across segments.

Slight variations.

Different wording.

Over time, this creates inconsistency.

If your messaging changes depending on the segment or speaker, what does that communicate to an international audience about your brand?

Consistency is not just about accuracy. It is about trust.
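A glossary is also easy to check against. As a minimal sketch, assuming you can pair each source segment with its translated output, the function below reports segments where a glossary term appears in the source but the approved translation is missing. The glossary entry and the sample segments are invented for illustration.

```python
def find_glossary_violations(segments, glossary):
    """Return (segment_index, source_term, expected_target) for each missed glossary term.

    `segments` is a list of (source_text, translated_text) pairs.
    `glossary` maps a source term to its single approved target term.
    Matching is a case-insensitive substring check, so inflected or
    reordered forms of the approved translation will be reported as misses.
    """
    violations = []
    for index, (source, translated) in enumerate(segments):
        for term, target in glossary.items():
            if term.lower() in source.lower() and target.lower() not in translated.lower():
                violations.append((index, term, target))
    return violations


glossary = {"free shipping": "envío gratis"}
segments = [
    ("Free shipping on all rings", "Envío gratis en todos los anillos"),
    ("Enjoy free shipping today", "Disfruta de entrega gratuita hoy"),
]
print(find_glossary_violations(segments, glossary))
```

Both translations in the example are understandable. Only one matches the wording the brand chose, and that is the difference a viewer notices over time.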

Prompting and Context

With LLM-based translation, prompts shape the outcome.

Tone.

Style.

Continuity.

Without guidance, translations may be technically correct but lack personality.

For example:

- A high-energy sports broadcast may sound flat in translation

- A news segment may lose urgency
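To make this concrete, here is a sketch of how tone, glossary terms, and recent context might be assembled into extra instructions for the translation step. The function name, the wording of the instructions, and the parameters are all hypothetical. How a given platform or model actually accepts extra prompts will differ.

```python
def build_translation_prompt(target_language, tone, glossary, recent_lines, max_context=3):
    """Assemble extra instructions for an LLM translation step as plain text."""
    lines = [
        f"Translate the live transcript into {target_language}.",
        f"Tone: {tone}.",
    ]
    if glossary:
        lines.append("Always use these translations:")
        lines.extend(f"- {source} => {target}" for source, target in glossary.items())
    # Keep only the most recent lines so the prompt stays short on a long-running stream.
    context = recent_lines[-max_context:] if max_context > 0 else []
    if context:
        lines.append("Previous lines, for continuity only (do not translate again):")
        lines.extend(f"- {line}" for line in context)
    return "\n".join(lines)


prompt = build_translation_prompt(
    target_language="Spanish",
    tone="high-energy, conversational",
    glossary={"free shipping": "envío gratis"},
    recent_lines=["Welcome back.", "Up next is our ring collection.", "Here it is."],
)
print(prompt)
```

Keeping the prompt as data rather than hard-coded text also makes it something you can version, review, and roll out like any other change.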

For a deeper look at extra LLM prompts for live translation, see this recent blog post:

https://www.syncwords.com/blog/how-to-leverage-llm-prompts-for-live-streaming-translation

How much control do you currently have over how your live content feels in another language?

And if that tone shifts unpredictably…

How does that impact engagement across different regions?

Monitoring Before It Reaches the Audience

Most teams monitor what is already live.

Fewer monitor what is about to go live.

Using SRT (Secure Reliable Transport) listener ports that accept multiple callers lets you monitor translated outputs upstream.

Before YouTube.

Before distribution.

This changes visibility entirely.

Instead of reacting to issues after they reach viewers, you can identify them in real time.
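As one possible approach, a monitoring host can connect to the listener as an additional caller and inspect which audio tracks are actually present. The sketch below assumes an FFmpeg build with SRT support. The host name and port are placeholders, and the language tags only appear if your multiplexer writes them. The code builds the `ffprobe` command and parses its JSON output, but does not run it.

```python
import json


def ffprobe_command(host, port):
    """Build an ffprobe invocation that connects to an SRT listener as a caller."""
    url = f"srt://{host}:{port}?mode=caller"
    return ["ffprobe", "-v", "error", "-show_streams", "-of", "json", url]


def audio_languages(ffprobe_json):
    """List the language tag of each audio stream in ffprobe's JSON output."""
    streams = json.loads(ffprobe_json).get("streams", [])
    return [
        stream.get("tags", {}).get("language", "und")  # "und" when no tag is present
        for stream in streams
        if stream.get("codec_type") == "audio"
    ]


# Placeholder endpoint; pass the command to subprocess.run on a host that can reach it.
print(" ".join(ffprobe_command("monitor.example.internal", 9000)))

# Trimmed, hand-written sample of the JSON shape ffprobe returns for -show_streams.
sample = '{"streams": [{"codec_type": "video"}, {"codec_type": "audio", "tags": {"language": "eng"}}, {"codec_type": "audio", "tags": {"language": "spa"}}]}'
print(audio_languages(sample))
```

If an expected language is missing from that list, you know before the stream reaches YouTube.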

How are you currently verifying translation quality before your audience sees it?

And if something goes wrong…

Do you catch it immediately, or only after viewers start to disengage?

The Experience Comes Down to Synchronization

Even accurate translation can fail if it does not feel natural.

Timing Alignment

Subtitles must match speech.

Dubbed audio must follow natural pacing.

Even a one- to two-second delay can be noticeable.

When you watch translated content today, how often does timing feel slightly off, even if the words are correct?

Voice Separation and Speaker Awareness

When multiple people are speaking:

- Who is talking matters

- How they sound matters

Without speaker-aware dubbing:

- All voices may sound the same

- Conversations become harder to follow

With speaker detection:

- Each voice is distinct

- Dialogue feels natural

If all translated voices sound the same, how easy is it for a viewer to stay engaged during a conversation?

And if it becomes difficult to follow…

How long do they stay before moving on?

End-to-End Timing

Synchronization is not one component.

It is the entire chain.

Video.

Audio.

Subtitles.

Dubbing.

All aligned.

Breakdowns often occur between systems rather than within them.

A delay in ASR.

Buffering in translation.

Encoder misalignment.
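A simple way to locate these gaps is to timestamp a segment as it leaves each stage and compare the handoffs. The sketch below assumes a five-stage chain and that every stage can log a wall-clock time for the same segment. The stage names and the sample timings are illustrative, not measurements.

```python
# Assumed order of stages in the chain; adjust to match your own workflow.
STAGES = ["capture", "asr", "translation", "dubbing", "mux"]


def hop_delays(stage_times):
    """Given {stage: seconds when a segment left that stage}, return the delay of each hop."""
    delays = {}
    for previous, current in zip(STAGES, STAGES[1:]):
        delays[f"{previous}->{current}"] = stage_times[current] - stage_times[previous]
    return delays


def slowest_hop(stage_times):
    """Name the handoff that added the most delay for this segment."""
    delays = hop_delays(stage_times)
    return max(delays, key=delays.get)


# Hypothetical timings, in seconds, for one segment.
segment = {"capture": 0.0, "asr": 0.8, "translation": 1.1, "dubbing": 2.6, "mux": 2.8}
print(slowest_hop(segment))
```

Tracking the slowest hop over many segments shows whether the bottleneck stays in one place or moves as the stream runs.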

Where in your current workflow is timing most likely to break down?

And if it does…

How visible is that issue to your audience?

What This Means Going Forward

We are entering a phase where continuous multilingual streaming is no longer experimental.

It is operational.

One channel already running this way: https://www.youtube.com/@JTVJoyas

So it becomes less about whether this is possible.

And more about timing.

Early adopters are already building global audiences.

Others are still evaluating.

As more channels begin offering real-time translation… how long before audiences start expecting it as the norm?

And if that expectation forms…

What happens to channels that have not adapted?

Not overnight.

But gradually.

Viewers explore alternatives.

Engagement shifts.

Growth slows.

Often without a clear signal as to why.

A Final Thought

If your channel is already running 24/7, the infrastructure is in place.

The question is not whether you can stream continuously.

It is something else.

How many potential viewers are experiencing your content today but not fully understanding it?

Because in many cases, they are already finding you.

They are just not staying.

And if that continues…

What does that mean for your growth over the next 12 to 24 months?

If you are exploring what a fully automated multilingual workflow could look like in your environment, it may be worth taking a closer look at how these systems are being deployed today.

Sometimes the biggest shift is not in the technology itself.

It is in recognizing what has already changed.